Grant writers are surrounded by data and pressure to prove impact. Yet proposals overloaded with statistics often underperform. This article explores the Data-Bias Trap and offers a practical framework to rebalance quantitative evidence with narrative context so proposals are both credible and compelling.

Why data alone is not winning more grants

Walk through any grant review panel and you will see proposals crammed with charts, percentages, and logic models. On paper, this looks like rigor. In practice, many of these data-heavy applications blur together, feel cold, and fail to convince reviewers that real change is happening or even possible.

This is not because data is unimportant. Funders need evidence of need, feasibility, and impact. The problem is how that evidence is selected, framed, and integrated into a persuasive case for support.

Three forces are converging:

- Internal pressure on leaders to be "data-driven"

- External pressure from funders to demonstrate measurable impact

- A growing but misunderstood emphasis on trust-based, community-driven philanthropy

The unintended result is the Data-Bias Trap – a pattern where organizations default to large-scale, impersonal quantitative data and underuse narrative, qualitative, and contextual evidence. Proposals end up full of proof but short on persuasion.

In this article, we explore how the trap works, why it undermines proposals, and what leaders can do about it. We introduce a practical framework – the Context-Data Sliders – to help organizations match their narrative and evidence strategy to different funder profiles, and we close with concrete actions to redesign how your team uses data in grant writing.

The subtle symptoms inside a typical proposal

Data-bias rarely appears as outright misuse of statistics. It shows up as a series of subtle, familiar patterns across standard sections of a proposal.

- Need statements that feel abstract and cold: Many proposals open with a sweeping statistic: “In our state, 50,000 children are food insecure.” This sounds serious, but for a reviewer it feels distant. It asks the mind to process a large number instead of inviting the reader into the lived experience of one child or family.

- Program designs that read like engineering diagrams: To prove rigor, organizations invest heavily in logic models and linear theories of change. Activities flow into outputs, then into outcomes in neat boxes and arrows. The experienced reviewer knows that real social change is messier and more contingent than any single flowchart suggests.

- Evaluation plans that measure what is easy, not what matters: Output indicators like “number of workshops delivered” or “number of people served” dominate reporting sections. True outcomes – changes in behavior, capability, well-being, or systems – are either weakly defined or missing altogether.

- Reporting that satisfies systems, not relationships: Grant reports are often packed with tables and metrics that few people read in depth. Internally, they are seen as compliance exercises. Externally, many funders quietly admit they lack the capacity or frameworks to fully use the data they collect.

These symptoms signal a deeper problem. They are not simply stylistic choices. They reflect the influence of well-documented cognitive biases and a misunderstanding of what funders in a trust-oriented philanthropic ecosystem actually value.

Cognitive shortcuts inside the writing process

Behind every data-heavy proposal is a busy professional managing deadlines and competing priorities. Under pressure, our brains rely on shortcuts.

Cognitive shortcut 1: The availability heuristic

The availability heuristic leads us to overvalue information that is easy to recall or access. For a grant writer, the most “available” information is often large-scale quantitative data – census tables, county health rankings, standardized test results. These are searchable, downloadable, and look objective.

Harder to access, but often far more persuasive, are qualitative data points such as client interviews, staff observations, beneficiary stories, or community listening sessions. Gathering this material takes time and collaboration. Under deadline, the path of least resistance wins. The proposal opens with what was easiest to find, not what would be most compelling.

Cognitive shortcut 2: Confirmation bias

Organizations tend to start from a favored solution – an existing program model, a planned expansion, a signature initiative. The implicit task then becomes “find data that proves this is the right answer.”

This confirmation bias leads writers to cherry-pick statistics that support their program and quietly ignore data suggesting that the real barrier might lie elsewhere. For example, an organization might focus on test scores to justify tutoring, while underplaying evidence pointing to chronic absenteeism driven by transportation gaps or family instability. The proposal becomes a defense of internal assumptions rather than an honest analysis of community need.

Misguided objectivity and the McNamara Fallacy

The Data-Bias Trap is not only about shortcuts. It also reflects a deeper philosophical error about what counts as “real” evidence.

The McNamara Fallacy describes the pattern of relying solely on metrics that are easy to measure, then treating everything harder to quantify as irrelevant or non-existent. In grant writing, this shows up as:

- Prioritizing countable activities – workshops delivered, meals served, counseling hours provided.

- Downgrading intangible but critical factors – trust, community belonging, psychological safety, resilience.

- Assuming that if it is not in a spreadsheet, it is not a credible result.

This mentality feeds the “logic model trap” where linear diagrams are created retroactively to justify funding, rather than to guide real program learning. What looks like rigor is often rigidity.

Misreading funder expectations

Finally, many organizations overestimate how much funders actually want dense data and underestimate the value placed on trust and relationship.

- Complex application forms and detailed reporting templates are interpreted as proof that funders demand exhaustive quantitative evidence.

- In reality, many foundations acknowledge that they struggle to synthesize the data they collect and are actively moving toward simpler, learning-oriented, trust-based approaches.

- Leading funders increasingly talk about partnership, flexible support, community voice, and streamlined reporting, yet many proposals still treat them as distant gatekeepers who must be impressed by the volume of data.

The Data-Bias Trap is therefore a strategic misalignment. Writers are optimizing for a perceived demand, not the funders’ actual evolving priorities.

The persuasion paradox: when numbers numb

Humans do not respond to numbers the way most grant writers assume. Two key psychological dynamics are at play.

The identifiable victim effect

People are more likely to feel empathy and take action when they are presented with a single identifiable person rather than a large group. A story about one parent who misses work because there is no nearby clinic is more motivating than a statistic about thousands of residents without primary care access.

Stories make abstract problems concrete. They allow reviewers to imagine success through the eyes of one beneficiary before scaling up in their minds.

Psychic numbing

As the number of people affected by a problem grows, our emotional response often decreases. Very large numbers can make problems feel overwhelming and unsolvable. A reviewer reading that “120,000 students are behind in reading” may feel that any single grant is a drop in the ocean.

When proposals lead with big statistics and never translate them back into human scale, they unintentionally trigger psychic numbing. The data that was meant to create urgency ends up flattening it.

Outputs vs outcomes: measuring activity instead of change

Data-bias also distorts evaluation.

- Outputs are the direct products of activities – classes delivered, participants reached, meals served. They are easier to count and report.

- Outcomes are the changes that result – improved health, higher graduation rates, increased income stability, stronger civic engagement. They are harder to define and measure, but they are what funders ultimately care about.

When evaluation sections focus almost exclusively on outputs, they signal that the organization may not have a clear theory of impact or the capacity to assess real change. This undermines confidence, even if the rest of the proposal is strong.

The Context-Data Sliders framework

Philanthropy as a high-context culture

Anthropology offers a useful lens. In low-context cultures, communication is explicit and literal. Detailed contracts and data-heavy reports are used to create clarity and manage risk. In high-context cultures, meaning depends more on relationship, shared history, and implicit understanding.

Modern philanthropy, especially at the foundation and corporate level, increasingly behaves like a high-context culture. Words like partnership, trust, learning, and alignment signal that:

– Long-term relationships matter more than single transactions.

– Informal conversations and site visits can shape decisions as much as formal metrics.

– Funders want to understand how organizations think, not just what they count.

By contrast, a purely data-driven proposal is a low-context document. It assumes that “the numbers speak for themselves” and that more metrics will automatically increase credibility. In a high-context environment, this can come across as cold, defensive, or disconnected from community realities.

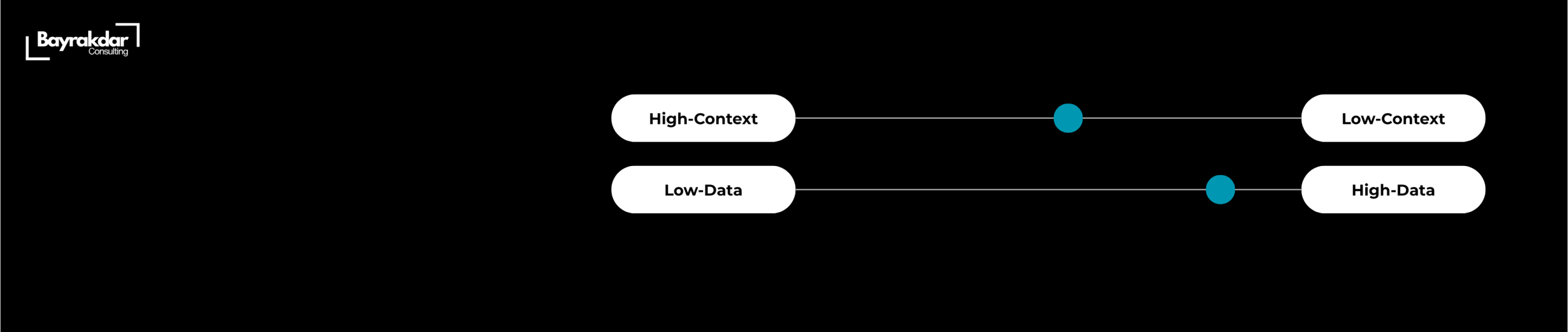

The Context-Data Sliders

To escape the Data-Bias Trap, organizations need to consciously balance narrative context and quantitative data based on the funder and relationship.

Think of two sliders:

– Slider 1: Level of context and narrative – stories, lived experience, community voice, qualitative insight.

– Slider 2: Level of data and technical detail – statistics, methods, models, evaluation frameworks.

Different proposals require different settings. A practical way to calibrate is to consider two dimensions:

1. Funder type

– Low-context funders: federal agencies, research councils, highly technical RFPs.

– High-context funders: family foundations, community foundations, corporate philanthropy with strong CSR narratives.

2. Relationship temperature

– Cold: first-time application, blind review, no prior contact.

– Warm: existing relationship, renewals, invited proposals.

This produces four scenarios:

1. Low-context funder, cold relationship – High data, focused context

– Lead with rigor, feasibility, and alignment with formal criteria.

– Use narrative mainly to clarify significance and human impact of the data.

2. High-context funder, cold relationship – High context, moderate data

– Lead with story, community connection, and mission alignment.

– Use data to validate, not dominate, the case.

3. Low-context funder, warm relationship – High data, moderate context

– The program officer trusts the organization but needs strong data to make an internal case.

– Acknowledge shared history, then provide detailed evidence and a disciplined evaluation plan.

4. High-context funder, warm relationship – High context, lean data

– The relationship does much of the work.

– Focus on shared successes, adaptive learning, and community voice, with enough data to confirm ongoing effectiveness.

The key is that the sliders are set deliberately, not by habit or fear.
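For teams that track funder profiles in a spreadsheet or CRM, the four scenarios above can be written down as a simple lookup table. The following is a minimal Python sketch, not part of the framework itself. The names `FunderType`, `Relationship`, `SLIDER_SETTINGS`, and `recommend_sliders` are hypothetical, and the labels only restate the settings listed above.

```python
from enum import Enum


class FunderType(Enum):
    LOW_CONTEXT = "low-context"    # e.g. federal agencies, research councils
    HIGH_CONTEXT = "high-context"  # e.g. family or community foundations


class Relationship(Enum):
    COLD = "cold"  # first-time application, blind review, no prior contact
    WARM = "warm"  # existing relationship, renewal, invited proposal


# Slider settings copied from the four scenarios in the text.
# The labels are qualitative guides, not measured values.
SLIDER_SETTINGS = {
    (FunderType.LOW_CONTEXT, Relationship.COLD): {"data": "high", "context": "focused"},
    (FunderType.HIGH_CONTEXT, Relationship.COLD): {"data": "moderate", "context": "high"},
    (FunderType.LOW_CONTEXT, Relationship.WARM): {"data": "high", "context": "moderate"},
    (FunderType.HIGH_CONTEXT, Relationship.WARM): {"data": "lean", "context": "high"},
}


def recommend_sliders(funder: FunderType, relationship: Relationship) -> dict:
    """Return the suggested data and context slider settings for a proposal."""
    return SLIDER_SETTINGS[(funder, relationship)]


if __name__ == "__main__":
    # A renewal with a local family foundation: high context, lean data.
    print(recommend_sliders(FunderType.HIGH_CONTEXT, Relationship.WARM))
```

A table like this is only a starting point for discussion. Its value is in making the team state the funder type and relationship temperature before drafting, so that the setting is a choice rather than a default.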

How the Data-Bias Trap shows up at portfolio level

For senior leaders, the Data-Bias Trap is not just a writing issue. It has strategic consequences across the organization:

– Portfolios may skew toward programs that are easiest to measure rather than those that are most transformative.

– Staff may become risk-averse, favoring activities that produce quick, countable outputs over complex work on systems and power.

– Funders may see the organization as competent but uninspired – efficient at delivering services, less clear about long-term change.

Scenario 1: Research-heavy public grants

A health nonprofit applies to a national research council. Here, low-context expectations are explicit: reviewers want methodological detail, power calculations, and clear evaluation frameworks.

Implication:

– The data slider should be high.

– However, narrative is still critical to explain why the research question matters, how communities are involved, and how findings will be translated into practice.

Scenario 2: Local family foundation

The same organization approaches a local family foundation whose giving is shaped by values, relationships, and stories from the community.

Implication:

– The context slider should be high.

– The proposal should open with a vivid local example, show deep listening to community partners, and then bring in selected data to demonstrate credibility and scale.

Scenario 3: Multi-year renewal with a corporate funder

Over several cycles, a corporate foundation has seen strong performance. The relationship is warm, but internal stakeholders still need to justify continued investment.

Implication:

– The proposal should celebrate shared achievements, bring forward beneficiary stories that align with the company’s brand and values, and present a concise, outcome-focused dashboard of key metrics.

Across these scenarios, the balance shifts, but the underlying principle stays constant: evidence must serve a story about real people and real change, not replace it.

Data is proof. Story is persuasion. Leaders must orchestrate both.

The push for data in philanthropy was a necessary correction. It challenged feel-good narratives and forced the sector to take results seriously. But in many organizations, the pendulum has swung too far.

The Data-Bias Trap emerges when numbers crowd out narrative, when outputs stand in for outcomes, and when proposals are crafted to impress an imagined data-hungry gatekeeper rather than to engage a real, context-sensitive partner.

Escaping this trap does not mean abandoning rigor. It means redefining rigor as the disciplined integration of quantitative evidence, qualitative insight, and cultural context. It means treating data as proof that makes a compelling story credible, not as a substitute for that story.

Leaders who adopt frameworks like the Context-Data Sliders, invest in human-centered data practices, and deliberately rebalance narrative and numbers will not only write stronger proposals but also build more honest internal learning cultures and more authentic relationships with funders and communities alike.

In a philanthropic environment that increasingly prizes trust and alignment, those are the organizations that will secure not just more grants, but better, longer-term, and more flexible support.